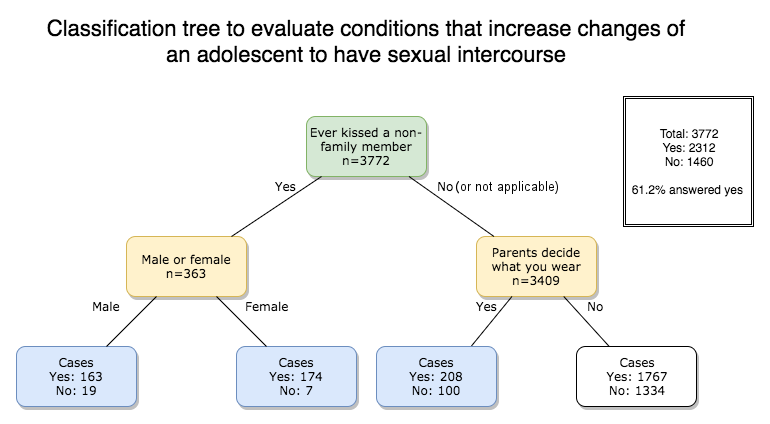

A decision tree can predict a particular target or response. I built the decision tree above using machine learning to test several relationships found in the National Longitudinal Study of Adolescent Health, a survey conducted in the United States.

The syntax is provided at the end of the post.

In this example, I demonstrate a way to create a decision tree in Python. The initial set of variables included:

1. Gender

2. Whether parents decide what you wear

3. Whether parents decide on the people you hang out with

4. Whether parents decide on which television programs you watch

5. Whether parents decide on what you eat

6. Over the last week, if at least one parent was present during dinner

7. Closeness to mother (either biological or adoptive)

8. Whether the individual kissed a non-family member

9. Whether the individual held hands with a non-family member

The initial decision tree was too large to be included. A pruned version can be seen here.

The tree was then selectively pruned further to give the final image seen at the start of the post. Three variables were selected: sex, whether the individual kissed a non-relative, and whether parents made decisions on what they wear. The four boxes at the bottom represent the results. Boxes where the percentage of those who have had sexual intercourse exceeds the baseline of 61% are highlighted in blue.

The modified dataset is provided here.

Only one category fell below the baseline, at 56%. This box is coloured white and represents individuals who have not kissed a non-relative and whose parents have given them freedom in what they wear. It would be interesting to further evaluate the trust relationship between the individual and the parent(s).
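The comparison of each box against the baseline can be sketched with pandas. This is only an illustration: the data below is made up, and the column names `kissed`, `own_clothes` and `had_sex` are hypothetical stand-ins, not the survey's variable names.

```python
import pandas as pd

# Toy data, made up for illustration only (not the Add Health values).
# kissed / own_clothes / had_sex are hypothetical 0/1 column names.
toy = pd.DataFrame({
    "kissed":      [1, 1, 1, 1, 0, 0, 0, 0, 0, 0],
    "own_clothes": [1, 1, 0, 0, 1, 1, 1, 0, 0, 0],
    "had_sex":     [1, 1, 1, 0, 0, 0, 1, 1, 1, 0],
})

# Baseline: share of the whole sample with the target equal to 1.
baseline = toy["had_sex"].mean()

# Share within each combination of the two predictors (one row per "box").
group_rates = toy.groupby(["kissed", "own_clothes"])["had_sex"].mean()

# Flag the groups whose rate exceeds the baseline.
above_baseline = group_rates > baseline

print(f"baseline: {baseline:.2f}")
print(pd.DataFrame({"rate": group_rates, "above_baseline": above_baseline}))
```

A decision tree does something similar automatically, except that it chooses which variables to split on instead of having them fixed in advance.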

The overall accuracy is about 61%. Repeating the steps produces similar accuracy, but you will notice that it fluctuates slightly from run to run, along with the counts of true positives, true negatives, false positives and false negatives, because the training and test sets are split at random each time. This is further explored in the next post.
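The run-to-run fluctuation can be reproduced with a small sketch. It uses synthetic data as a stand-in for the survey (the columns `a`, `b`, `c` and the 30% noise level are my own assumptions), and varies `random_state` in `train_test_split` to imitate repeated runs.

```python
import numpy as np
import pandas as pd
from sklearn.metrics import accuracy_score, confusion_matrix
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the survey data: three binary predictors and a
# noisy binary target. None of these values come from Add Health.
rng = np.random.default_rng(0)
X = pd.DataFrame(rng.integers(0, 2, size=(500, 3)), columns=["a", "b", "c"])
noise = rng.random(500) < 0.3
y = np.where(noise, 1 - X["a"], X["a"])  # flip about 30% of the labels

accuracies = []
for seed in range(5):
    # A different random_state gives a different train/test split.
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.4, random_state=seed
    )
    model = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)
    predicted = model.predict(X_test)
    accuracies.append(accuracy_score(y_test, predicted))
    # Rows are true classes, columns are predicted classes.
    tn, fp, fn, tp = confusion_matrix(y_test, predicted).ravel()
    print(f"seed={seed} accuracy={accuracies[-1]:.3f} TN={tn} FP={fp} FN={fn} TP={tp}")
```

Passing a fixed `random_state` to `train_test_split` is one way to make a single run reproducible.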

The code is as follows. It generates a .dot file, which can then be converted into a PNG image or PDF, either using Python itself or with a simple dot command:

[code language="python"]

# -*- coding: utf-8 -*-

from pandas import Series, DataFrame
import pandas as pd
import numpy as np
import os
import matplotlib.pylab as plt
# sklearn.cross_validation was removed in newer scikit-learn releases;
# train_test_split now lives in sklearn.model_selection
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report
import sklearn.metrics

# Remember to replace "file directory" with the active folder
os.chdir("file directory")

"""
Data Engineering and Analysis
"""

# Load the dataset
AH_data = pd.read_csv("addhealth_pds.csv")

# Drop rows with missing values
data_clean = AH_data.dropna()

data_clean.dtypes
data_clean.describe()

"""
Modeling and Prediction
"""

# Split into training and testing sets
predictors = data_clean[['BIO_SEX', 'H1LR2', 'H1WP3']]
targets = data_clean.H1CO1

pred_train, pred_test, tar_train, tar_test = train_test_split(predictors, targets, test_size=.4)

pred_train.shape
pred_test.shape
tar_train.shape
tar_test.shape

# Build model on training data
classifier = DecisionTreeClassifier()
classifier = classifier.fit(pred_train, tar_train)

predictions = classifier.predict(pred_test)

sklearn.metrics.confusion_matrix(tar_test, predictions)
sklearn.metrics.accuracy_score(tar_test, predictions)

# Displaying the decision tree
from sklearn import tree
from IPython.display import Image

# Write the fitted tree to out.dot in Graphviz format
tree.export_graphviz(classifier, out_file='out.dot')

[/code]

Subsequently, run the following command in a terminal to convert the dot file into a png:

[code language="bash"] dot -T png out.dot -o treepic.png [/code]
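The exported tree refers to predictors by generic feature indices unless told otherwise. As a hedged sketch, `export_graphviz` also accepts `feature_names`, `class_names` and `filled`, which make the picture easier to read. The data here is randomly generated, the "no"/"yes" labels are my assumed meaning of the target codes 0 and 1, and `out_labelled.dot` is a hypothetical file name.

```python
import numpy as np
import pandas as pd
from sklearn.tree import DecisionTreeClassifier, export_graphviz

# Synthetic stand-in data; the column names mirror the predictors used above,
# but the values are randomly generated, not taken from the survey.
rng = np.random.default_rng(1)
X = pd.DataFrame(
    rng.integers(0, 2, size=(300, 3)), columns=["BIO_SEX", "H1LR2", "H1WP3"]
)
y = X["H1LR2"].to_numpy()  # target made to depend on one column, for a clear split

clf = DecisionTreeClassifier(max_leaf_nodes=4, random_state=0).fit(X, y)

# out_file=None makes export_graphviz return the DOT source as a string.
dot_source = export_graphviz(
    clf,
    out_file=None,
    feature_names=list(X.columns),  # label splits with column names
    class_names=["no", "yes"],      # assumed meaning of target codes 0 and 1
    filled=True,                    # colour nodes by majority class
)

# Hypothetical output name; convert it with the same dot command as above.
with open("out_labelled.dot", "w") as handle:
    handle.write(dot_source)
```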

Pruning

Pruning can be done either by selecting the best variables manually or by letting the algorithm do it for you. For the latter, pass max_leaf_nodes=n to DecisionTreeClassifier, which caps the number of leaves and can produce a completely different tree:

[code language="python"] classifier=DecisionTreeClassifier(max_leaf_nodes=5) [/code]
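To see the effect of the cap, the number of leaves can be compared with and without `max_leaf_nodes`. This is a minimal sketch on synthetic data (nine random binary predictors and a noisy target of my own making), not the survey data.

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier

# Synthetic data for illustration only: nine binary predictors and a noisy target.
rng = np.random.default_rng(2)
X = rng.integers(0, 2, size=(1000, 9))
y = (X[:, 0] + X[:, 1] + rng.random(1000) > 1.2).astype(int)

# Same data, once without a limit and once capped at five leaves.
full_tree = DecisionTreeClassifier(random_state=0).fit(X, y)
capped_tree = DecisionTreeClassifier(max_leaf_nodes=5, random_state=0).fit(X, y)

print("leaves without a cap:", full_tree.get_n_leaves())
print("leaves with max_leaf_nodes=5:", capped_tree.get_n_leaves())
```

Strictly speaking, the cap stops the tree from growing rather than cutting back a finished tree, but the result is a similarly compact picture.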

After removing cases where individuals did not answer either yes or no in the question “Have you kissed a non-family member?”, the tree is now as follows:
